Low-level Visual Properties

In addition to semantic prototypes, ProtoSim also discovers prototypes that capture low-level visual properties, such as motion blur and shallow depth of field.

Dataset summarisation is a fruitful approach to dataset inspection. However, when applied to a single dataset, the discovery of visual concepts is restricted to those that are most prominent. We argue that a comparative approach can expand upon this paradigm to enable richer forms of dataset inspection that go beyond the most prominent concepts.

To enable dataset comparison, we present a module that learns concept-level prototypes across datasets. We leverage self-supervised learning to discover these prototypes without supervision, and we demonstrate the benefits of our approach in two case studies. Our findings show that dataset comparison extends dataset inspection, and we hope to encourage more work in this direction.

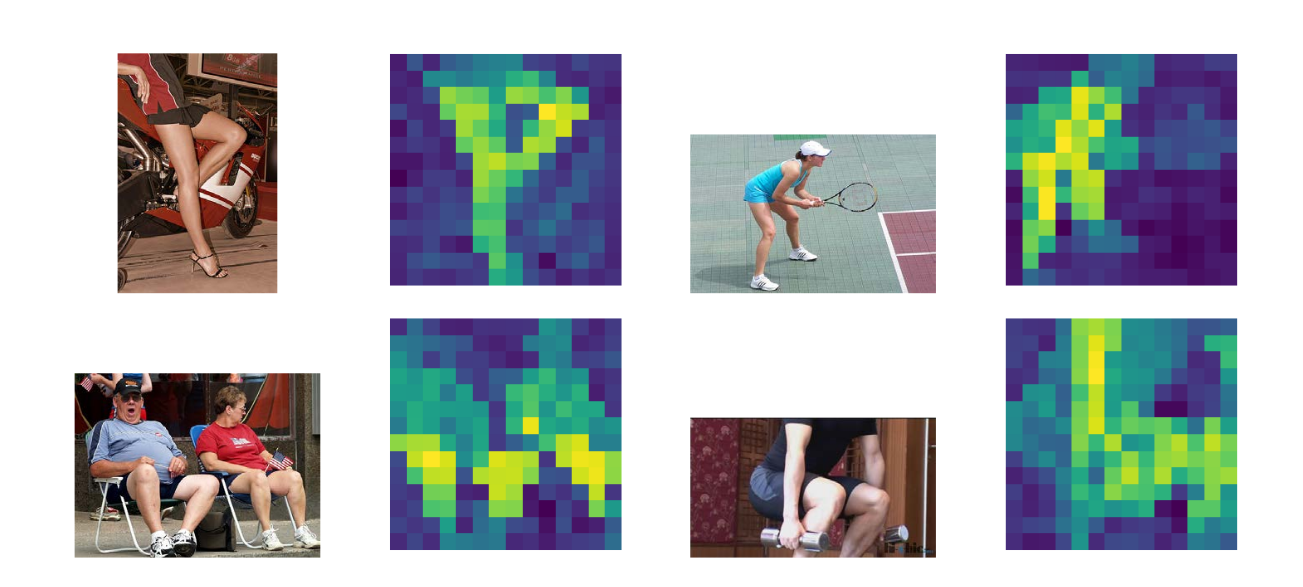

By visualising the attention maps we can show in which parts of the image the prototype is found.
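As a rough illustration of this kind of visualisation, the sketch below overlays a patch-level attention map on an image. It is not the ProtoSim code: the function names `upsample_attention` and `overlay_attention`, the 14x14 map size, the nearest-neighbour upsampling, and the red-channel blending are all assumptions chosen for the example, and random arrays stand in for a real image and a real attention map.

```python
import numpy as np


def upsample_attention(attn, image_hw):
    """Nearest-neighbour upsample of a (h, w) patch-level map to (H, W)."""
    h, w = attn.shape
    H, W = image_hw
    # Map every output pixel to the index of the patch that covers it.
    rows = np.arange(H) * h // H
    cols = np.arange(W) * w // W
    return attn[rows[:, None], cols[None, :]]


def overlay_attention(image, attn, alpha=0.5):
    """Blend a min-max normalised attention map over an RGB image in [0, 1]."""
    heat = upsample_attention(attn, image.shape[:2])
    span = heat.max() - heat.min()
    heat = (heat - heat.min()) / span if span > 0 else np.zeros_like(heat)
    # Show attention in the red channel only (an arbitrary display choice).
    colour = np.zeros_like(image)
    colour[..., 0] = heat
    return (1 - alpha) * image + alpha * colour


# Hypothetical inputs: a random "image" and a random 14x14 attention map.
rng = np.random.default_rng(0)
image = rng.random((224, 224, 3))
attn = rng.random((14, 14))
blended = overlay_attention(image, attn)
```

Regions where the prototype receives high attention appear with a stronger red tint in `blended`; a smoother interpolation or a perceptual colour map could be substituted without changing the overall idea.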

@inproceedings{vannoord2023protosim,
  author = {{van Noord}, Nanne},
  title = {Prototype-based Dataset Comparison},
  booktitle = {ICCV},
  year = {2023},
}